Are we building responsible tech?

Google is being sued in the UK over the use of patient data. Facial recognition company Clearview AI is being sued in Europe for privacy violations. In the US, McDonald’s customers are suing the company over its new voice-recognition technology, alleging that it collects data without consent. While legal issues are growing, ethical concerns that might not even fall within the purview of law are also mounting.

At the same time, artificial intelligence is growing more insidious. Algorithms are having adverse effects on mental health; take Instagram, for instance. Researchers suggest that AI might cause significant damage merely by following human instructions. And that is to say nothing of how AI is shaping hardware design.

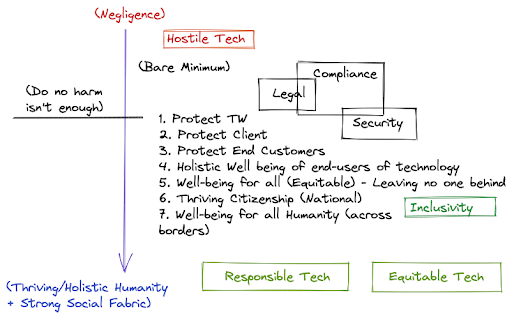

For a long time, technologists have followed the ‘do no harm’ principle in building software. On the spectrum from negligent to holistic, organizations have been content with protecting themselves and their clients. Even progressive organizations often stop at protecting their end-users. As a result, hostile tech is omnipresent today.

‘Do no harm’ is no longer enough. As an industry, we need to do better. This article explores how the community can rethink software development practices to build inclusive, equitable and responsible tech.

Holistic wellbeing of end-users

As technology becomes more pervasive in our everyday lives, the responsibility for making sure that it doesn’t harm the wellbeing of its users falls squarely on the shoulders of the software development and business community. That responsibility covers not just users’ immediate experience of the product, but also its downstream effects on their physical, emotional, mental and social wellbeing.

For example, when building habit-forming software for social media or gaming, developers need to reconsider product design, even if it means less revenue, instead of placing the burden of moderating use on the users themselves. The cosmetics brand Lush recently announced that it was deactivating its Instagram, Facebook, TikTok and Snapchat accounts, in response to growing concerns about the platforms’ impact on mental health.

Wellbeing of all

While building software, we often look only at the core users of the systems we design. User-centered design, by definition, creates personas for the groups we believe our users to be. This approach often overlooks the impact of these products on those we don’t actively seek to serve. As a result, software can have unintended and horrifying consequences.

For example, earlier this year, Cameroon saw a wave of arrests and abuse against LGBTQ+ people, mostly at events organized by HIV associations. Now imagine the unintended harm that can be caused by a medical system that tags the HIV status of patients, even if it is intended to inform healthcare workers who monitor the patients’ health.

Thriving citizenship

Social tools today have a disproportionate impact on how we perceive the world. Technology mediates not only our conversations but also much of our consciousness. Fake news and misinformation can have grave consequences. Anti-vaccine propaganda, for instance, has the ability to affect global health, not just the individual declining vaccination.

So, technologists must proactively identify risks to society and build digital tools that facilitate citizenship through transparent and flexible interactions. Tattle, a suite of products aimed at building a healthier online information ecosystem in India, is a great example of the possibilities.

Wellbeing for all humanity across borders

Technology, even when used within a country’s borders, can have a global impact. For example, it might create a negative environmental footprint or include work from international partners who don’t uphold labour standards. We should watch for such downstream effects and stop them from snowballing over time.

Technologists, for a long time, have lived in cocoons of their own making. Setting myopic goals like ‘increase customer time on the site’ or ‘improve stickiness on the app’ has led us to create hostile tech that causes more harm than we intended or foresaw.

Reversing that requires building a movement that keeps responsible tech at the core of what we do. Going beyond building software, the community needs to work towards changing the industry’s attitudes to building technology. We need to spark a movement of technologists who deeply care about responsibility and ethics in tech.